According to Bloomberg, the company is targeting a launch window around 2027 – and the project signals a broader push into everyday, camera-enabled wearables.

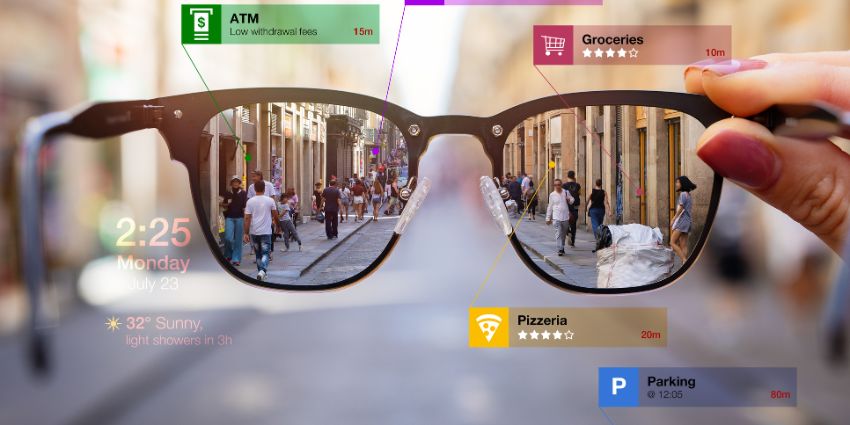

For the burgeoning wearables market, the implications are significant – Apple appears to be positioning these glasses as an always-on companion for calls, notifications, and contextual information rather than a full augmented reality headset.

Apple Pivots To Simpler Smart Glasses

For years, Apple explored multiple head-worn device concepts, including tethered AR hardware and true augmented-reality glasses.

Only one of those ideas reached the market, arriving as a mixed-reality headset in 2024.

Now the company is betting on a more practical stepping stone – lightweight, display-free smart glasses designed for everyday use.

The shift mirrors the growing popularity of camera-equipped eyewear from competitors such as Meta, which helped validate consumer demand for wearable cameras and AI assistants.

Apple’s version is expected to focus on real-world productivity and communication scenarios.

The glasses are reportedly designed to capture photos and video hands-free, manage calls and notifications, play music and audio, deliver voice-driven assistance via Siri, and sync tightly with the iPhone for editing and sharing.

For XR professionals, this hints at workflows where users can join calls, capture field updates, and receive contextual prompts without pulling out a phone.

A Broader AI Wearables Strategy

The glasses are reportedly just one piece of a wider AI hardware push that also includes camera-enabled AirPods and a wearable pendant device.

Together, these products are designed to feed visual context into Apple’s AI stack, enabling more situational awareness and hands-free interaction.

Apple is aiming to compete through premium design and tight ecosystem integration rather than first-mover advantage.

The company is developing multiple frame styles and materials while maintaining in-house control over design.

This approach may help Apple stand out from rivals that rely on eyewear partners: Google and Samsung Electronics are working with Warby Parker, while Meta has leaned on EssilorLuxottica.

How The Market Looks

Market momentum around AI eyewear is becoming difficult to ignore.

Devices like the Ray-Ban Meta smart glasses have demonstrated that consumers are willing to embrace camera-equipped wearables when the value proposition is clear.

Meanwhile, enterprise interest in hands-free computing is growing across sectors such as logistics, field services, healthcare, and manufacturing.

In these environments, the ability to capture live video, receive contextual prompts, or communicate without handling a device can drive measurable productivity gains.

Apple’s entry could accelerate this shift by bringing tighter mobile integration and a familiar developer ecosystem to the category.

As with the Apple Watch and AirPods, the long-term goal appears to be creating an instantly recognisable wearable category that becomes part of daily life.

Why This Matters

Apple’s wearable AI push could have long-term implications for IT leaders.

Hands-free calling and notifications could move beyond smartphones, field workers may gain real-time visual assistance, and context-aware AI could enhance productivity and meeting workflows.

If Apple succeeds in making AI wearables mainstream, the ripple effects across the unified communications (UC) landscape could be substantial.

Collaboration platforms may increasingly evolve toward voice-first, ambient, and camera-aware experiences.

Meetings, messaging, and task management could shift from screen-based interactions to lightweight, always-available interfaces.

Privacy And Data Concerns

The arrival of any AI-powered smart glasses is expected to reignite longstanding concerns around wearable cameras, data capture, and consent in public and workplace environments.

Early smart glasses products from Meta helped establish the category commercially, but also drew scrutiny over discreet recording capabilities.

In practice, concerns have centred on whether bystanders are adequately aware when recording is taking place, particularly in environments such as gyms, retail spaces, and workplaces where camera indicators may not always be immediately obvious.

Those concerns have already prompted policy responses in some settings.

Certain venues and organisations have explored restrictions on camera-enabled eyewear, citing risks around employee privacy, customer confidentiality, and the potential for inadvertent data capture in sensitive areas.

Apple is likely to encounter similar questions as it develops its own smart glasses platform, particularly given the emphasis on always-on camera input and AI-driven contextual awareness.

Regulatory frameworks such as GDPR further complicate deployment in sectors including healthcare, legal services, and financial institutions, where inadvertent capture of identifiable data may trigger compliance obligations.

The challenge for Apple will be balancing its established privacy positioning with the operational realities of a device designed to continuously interpret the user’s environment.

While on-device processing and visible recording indicators are expected to play a key role in its approach, industry experience suggests that transparency and consent will remain central to enterprise adoption.